NVIDIA GTC 2026: Live Updates on What’s Next in AI

Artificial intelligence is moving from simple, prompt-based tools to intelligent, long-running systems that reason, plan and act. These autonomous agents don’t just generate text. They can write code, call tools, analyze data, simulate outcomes and continuously improve.

To build and run always-on agents with supercomputer-class intelligence, developers need the right infrastructure.

Pairing NVIDIA DGX Spark and NVIDIA DGX Station systems with the new NVIDIA NemoClaw open source stack provides the ultimate platform for locally developing and deploying autonomous, long-running agents, known as claws. The systems bring AI-factory-class performance directly to where intelligence is created: at the desk and inside the enterprise.

Securing Agentic AI With NemoClaw

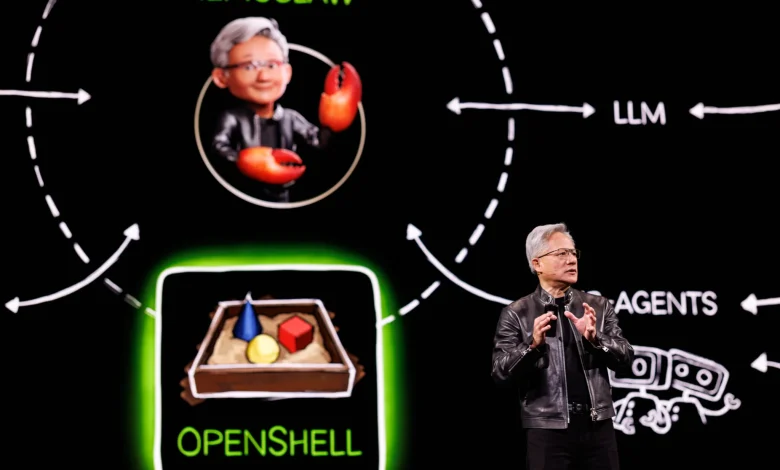

NVIDIA NemoClaw is an open source stack that lets developers run always-on OpenClaw assistants more safely, with a single command. It installs the NVIDIA OpenShell runtime (part of the NVIDIA Agent Toolkit), a secure environment for running autonomous agents, along with open source models such as NVIDIA Nemotron.

Enterprises deploying autonomous agents across proprietary workflows need governance, isolation and control. OpenShell defines how agents access data, use tools and operate within policy boundaries, providing the architectural foundation for secure, always-on AI systems.

Self-evolving agents need dedicated computing to build tools and software as they complete tasks autonomously. DGX Spark and DGX Station are ideal environments for running NemoClaw to build and validate agents with OpenClaw locally before scaling to data center AI factories.

DGX Spark: Scalable AI for Enterprise Teams

While DGX Station represents the most powerful deskside AI system, DGX Spark brings scalable AI infrastructure to domain teams across the enterprise.

With large local memory, strong performance and integration with NemoClaw, DGX Spark is ideal for autonomous agent development and deployment.

DGX Spark now supports clustering up to four systems in a unified configuration, creating a compact "desktop data center" whose performance can scale up to linearly with the number of systems, without the complexity of traditional rack deployments.

An upcoming software release for DGX Spark is expected to further strengthen orchestration and manageability, enabling faster iteration and smoother transitions from prototype to production.

DGX Spark supports the latest AI models, including NVIDIA Nemotron 3 and leading open models, ensuring developers can build on a modern, continuously evolving AI software stack.

Across industries, organizations are moving DGX Spark from evaluation environments into active enterprise deployment. Financial institutions are accelerating risk modeling. Healthcare researchers are compressing discovery timelines. Energy companies are optimizing operations. Media and telecommunications teams are building real-time content and communications workflows.

DGX Station: Data-Center-Class AI at the Desk

NVIDIA DGX Station, the world's most powerful deskside supercomputer, has arrived for this new phase of long-thinking, autonomous AI.

Powered by the NVIDIA GB300 Grace Blackwell Ultra Desktop Superchip, DGX Station provides 748 gigabytes of coherent memory and up to 20 petaflops of AI compute performance. It connects a 72-core NVIDIA Grace CPU and NVIDIA Blackwell Ultra GPU through NVIDIA NVLink-C2C, creating a unified, high-bandwidth architecture built for frontier AI workloads.

It enables developers to run open models of up to 1 trillion parameters and to develop long-thinking autonomous agents directly from their desks.
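As a rough, weights-only sanity check on how a 1-trillion-parameter model relates to the stated 748 gigabytes of coherent memory, the arithmetic below assumes 4 bits per parameter (an assumption; the post does not state which precision is used) and ignores KV cache, activations and runtime overhead. Here N is the parameter count and b the bits stored per parameter.

```latex
M_{\text{weights}} = N \cdot \frac{b}{8}\ \text{bytes}
  = 10^{12} \times \frac{4}{8}\ \text{bytes}
  = 5 \times 10^{11}\ \text{bytes}
  = 500\ \text{GB} < 748\ \text{GB}
```

At 8 bits per parameter, the same weights would need about 1,000 GB, more than 748 GB. This suggests that running models at this scale on a single system likely relies on 4-bit or similar low-precision weights.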

DGX Station can operate as a personal AI supercomputer or as a shared, on-demand compute node for teams. It supports air-gapped configurations, making it well suited for regulated industries and sovereign environments. Applications developed locally can move seamlessly to NVIDIA GB300 NVL72 systems in the data center or cloud without rearchitecting.

Industry leaders are already harnessing DGX Station to accelerate real-world innovation. Snowflake is using DGX Station to locally test its open source Arctic training framework. EPRI is using and testing it to advance AI-powered weather forecasting that strengthens grid reliability. Medivis is using DGX Station to integrate vision language models into surgical workflows. Sungkyunkwan University is using the system to accelerate protein structure analysis. Microsoft Research and Cornell University are tapping DGX Station to enable hands-on AI training at scale. Respo.Vision, WSP and 1X are deploying AI for advanced sports analytics, synthetic data training, autonomous agents and humanoid robotics.

Systems are available to order now and will begin shipping in the coming months from ASUS, Dell Technologies, GIGABYTE, MSI and Supermicro, and later in the year from HP.

One Architecture — From Desktop to AI Factory

DGX Station and DGX Spark ship preconfigured with the NVIDIA AI software stack, enabling developers to use familiar tools and move seamlessly between local development and large-scale infrastructure.

Developers can run and fine-tune state-of-the-art models on DGX Station, including OpenAI gpt-oss-120b, Google Gemma 3, Qwen3, Kimi K2.5, Mistral Large 3, DeepSeek V3.2 and NVIDIA Nemotron, and tap into a wide variety of familiar tools and platforms from 1X, Aible AI, Anaconda, Docker, Red Hat, JetBrains, Ollama, llama.cpp, ComfyUI, LM Studio, llm.c, Weights & Biases (acquired by CoreWeave), Odyssey, Roboflow, vLLM, SGLang, Unsloth, Learning Machine, Quali, Lightning AI and more.

By unifying chips, systems, networking and software into a coherent architecture, NVIDIA enables enterprises to build once and scale everywhere — from a deskside DGX Station to multi-node DGX Spark clusters to full AI factories.

Learn more about DGX Spark at NVIDIA GTC. Attend sessions on scalable autonomous agents and AI infrastructure, see live NemoClaw-enabled AI agents and multi-node Spark demos in the exhibit hall, or visit this webpage to access deployment guides and beta resources.

Developers can get started quickly on DGX Station and test a large sample of AI workflows. Learn more and order a DGX Station from an NVIDIA partner.

See notice regarding software product information.
