Nvidia-Groq Deal Validates AI Chip Startup Landscape

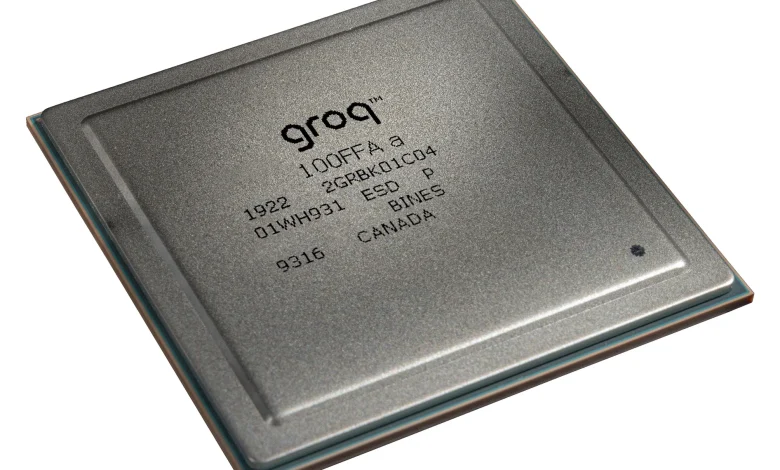

Nvidia’s deal with Groq, in which the GPU giant reportedly paid $20 billion for a non-exclusive license to Groq’s technology and hired most of its technical team, has had two big ramifications across the AI chip industry.

First, if we assume Nvidia all but acquired Groq for its technology (rather than for commercial reasons), the deal validates that there is a market for non-GPU accelerators at scale.

AI chip companies have gone after fast single-user token speeds and short time to first token since it became clear that these are a GPU’s Achilles heel. Groq and Cerebras have long been able to beat Nvidia on single-user token speed, but with insufficient market interest in buying non-GPU hardware at the time, the companies had to build out their own infrastructure to prove it.

Nvidia’s all-but acquisition of Groq suggests Nvidia is admitting the existence of this Achilles heel. We’ll have to wait until GTC later this month to find out more about what Nvidia is planning, but industry rumors suggest Groq’s hardware IP could be used to avoid a bottleneck in part of the decode portion of the LLM inference workload.

“What we’ll do is we’ll extend our architecture with Groq as an accelerator, in very much the way that we extended Nvidia’s architecture with Mellanox,” Nvidia CEO Jensen Huang said during the company’s earnings call last week.

Secondly, the reported price tag of $20 billion for this deal puts a dollar value on fast inference architectures, which is no doubt making a lot of AI chip startup CEOs, and their investors, very happy right now.

Since the Nvidia-Groq deal was announced:

- Cerebras landed a deal worth $10B with OpenAI, closed a $1-B series H with a post-money valuation of $23B, and is reportedly preparing to re-file its IPO paperwork.

- SambaNova nixed an acquisition offer from Intel, reportedly for just $1.6B, in favour of a $350-million series E.

- Etched announced a $500-million round at a $5-B valuation.

- Neurophos raised a $110-million series A.

- Stealthy British photonic AI chip startup Olix has reportedly raised $220 million.

And that’s just in the last eight weeks.

Sandra Rivera (Source: Vsora)

Nvidia’s deal with Groq is great news for other chip startups, Sandra Rivera, chair of the board at AI chip startup Vsora, told EE Times during a recent interview.

“Through the Groq licensing deal, [Nvidia] has acknowledged that [inference] is not a one-size-fits-all, it just isn’t,” Rivera said. “You really do need a different architectural approach for different parts of the workload, and I think that that probably is the strongest validation that there will be a heterogeneous set of architectures being deployed.”

Vsora has developed a data center inference product with relatively large HBM capacity (eight stacks of HBM3); part of Rivera’s responsibilities in her new position as board chair will include supporting short-term fundraising activities, she said.

“Here in Silicon Valley, the market is very frothy,” Rivera said. “There’s a lot of investment going into many, many companies, valuations are growing quite substantially, and it’s because there’s still such an opportunity…there’s just such a level of optimism…we’re still in the early days and there’s a lot of growth in the days, months and years ahead.”

A recent conversation with SambaNova CEO Rodrigo Liang confirmed the Nvidia-Groq deal is being seen as a win for all AI chip startups.

“The signal is very clear that traditional GPUs can’t compete in the inference market,” Liang told EE Times. “The biggest risk to Nvidia is they get put in a niche as a training solution.”

Rodrigo Liang (Source: SambaNova)

As well as speed, per-rack power and per-rack power efficiency for inference are crucial to service providers’ economics but represent another weak point for GPU architectures, Liang said.

OpenAI’s eagerness to use non-GPUs for inference is a signal that inference is the real battleground, Liang said, but in most cases, the economics of those other architectures still remain to be proven.

SambaNova had been working on raising funding before the Nvidia-Groq news broke, Liang said, noting there nevertheless seems to be “a new appetite for investing in chips…Nvidia’s numbers continue to be impressive, which shows there’s insatiable demand,” he said.

The eventual inference chip market will have multiple players, albeit with a gap between the leader and the followers, he added.

The Nvidia-Groq deal threw the spotlight onto low-latency inference as a category, D-Matrix CEO Sid Sheth told EE Times at Web Summit Qatar recently.

“As we go deeper into the age of inference, it’s not going to be a one-size-fits-all, it’s not going to be all GPUs,” Sheth said. “The Nvidia-Groq deal clearly outlined that, and there is this whole low-latency inference category that is now beginning to emerge. That is not a GPU-only category.”

Anything that can improve user experience will be popular in the market, Sheth said.

Sid Sheth (Source: D-Matrix)

“As soon as low-latency options became available, people tried it and new use cases emerged and before you knew it, Nvidia was behind the curve, and then they had to act – their hand was forced,” he said.

The industry trend toward disaggregated inference (running the prefill and decode parts of the workload on different types of GPUs) will continue, and the same thesis extends to non-GPU solutions, which can work alongside GPUs to accelerate specific chunks of the workload.

“We saw so many different constraints to optimize for inference, we felt like there’s no way a single solution can address all of those,” Sheth said. “There’s big models, small models, throughput, latency, cost, energy, and on top of that there’s availability. You have all these different requirements and to expect that you could brute force all of that with a single GPU, I don’t know how that works.”

The $20B price tag on Groq certainly calmed the market, he said.

“It’s good for the whole world that there’s not just one solution that can serve everyone’s needs,” Sheth said. “There will be other winners here.”