Should you really trust health advice from an AI chatbot?

Researchers are starting to unpick the strengths and weaknesses of chatbots.

The Reasoning with Machines Laboratory at the University of Oxford got a team of doctors to create detailed, realistic scenarios. These ranged from mild health issues you could deal with at home, through to cases needing a routine GP appointment, a trip to A&E, or an ambulance.

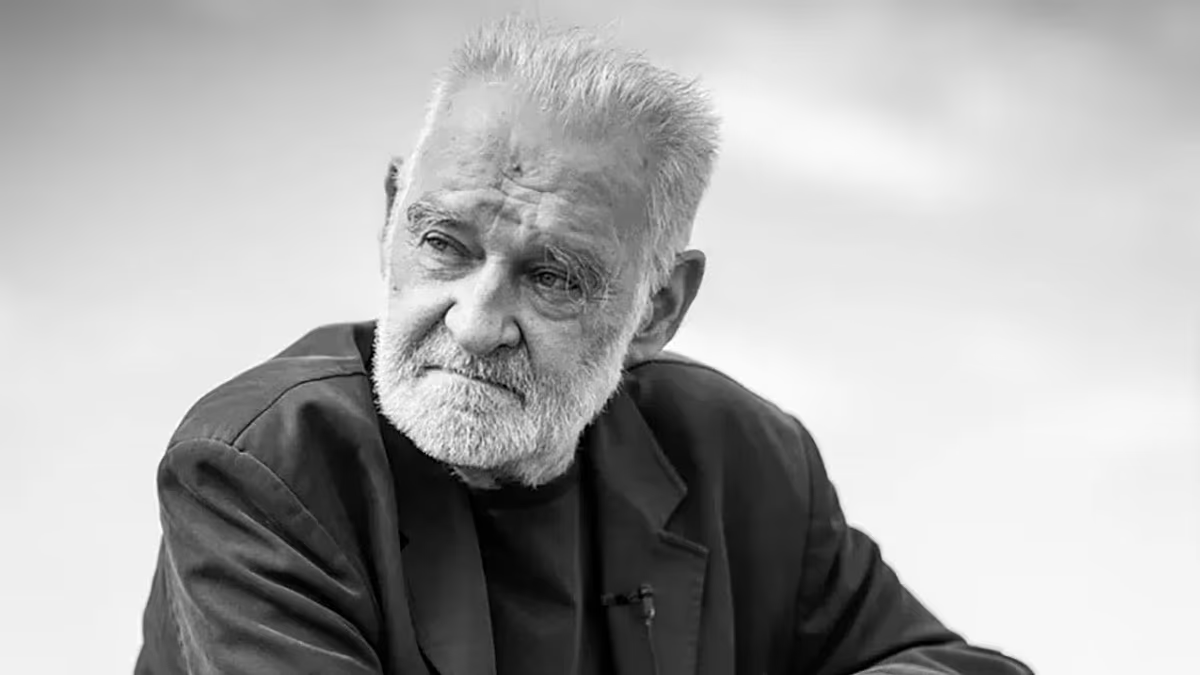

When the chatbots were given the complete picture they were 95% accurate. “They were amazing, actually, nearly perfect,” researcher Prof Adam Mahdi tells me.

But it was a very different story when 1,300 people were each given a scenario and asked to talk it through with a chatbot to get a diagnosis and advice.

It was the human-AI interaction that made things unravel. Accuracy fell to 35%, so roughly two thirds of the time people ended up with the wrong diagnosis or the wrong level of care.

Mahdi told me: “When people talk, they share information gradually, they leave things out and they get distracted.”

One scenario described the symptoms of a subarachnoid haemorrhage, a type of stroke caused by bleeding on the brain. This is a life-threatening emergency that requires urgent hospital treatment.

But as you can see, subtle differences in how people described those symptoms to ChatGPT led to wildly different advice.