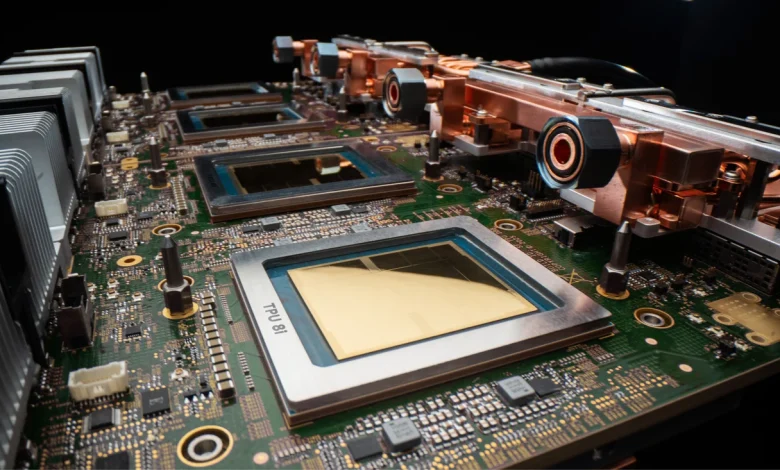

Our eighth-generation TPUs: two chips for the agentic era

Co-designed for Gemini, open for everyone

This eighth-generation TPU is also the latest expression of our co-design philosophy, where every spec is built to solve AI’s biggest hurdles.

- Boardfly topology was designed specifically for the communication demands of today’s most capable reasoning models.

- SRAM capacity in TPU 8i was sized for the KV cache footprint of reasoning models at production scale.

- Virgo Network fabric’s bandwidth targets were derived from the parallelism requirements of trillion-parameter training.
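To make the KV cache point concrete, here is a minimal sketch of the standard footprint arithmetic for a decoder-only transformer. The model configuration below is hypothetical and chosen only for illustration; it does not describe Gemini or the actual SRAM capacity of TPU 8i.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len, batch_size,
                   bytes_per_value=2):
    """Bytes needed to cache attention keys and values during decoding."""
    # Factor of 2: every layer stores one key tensor and one value tensor.
    return (2 * num_layers * num_kv_heads * head_dim
            * seq_len * batch_size * bytes_per_value)


# Hypothetical configuration (an assumption, not a published spec):
# 32 layers, 8 KV heads of dimension 128, 8,192 tokens of context,
# a single sequence, and 16-bit (2-byte) cache values.
footprint = kv_cache_bytes(num_layers=32, num_kv_heads=8, head_dim=128,
                           seq_len=8192, batch_size=1)
print(f"{footprint / 2**30:.2f} GiB")  # prints "1.00 GiB"
```

The footprint grows linearly with both context length and the number of concurrent sequences, which is why long reasoning traces served at production batch sizes put so much pressure on on-chip memory.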

And for the first time, both chips run on Google’s own Axion ARM-based CPU host, allowing us to optimize the full system, not just the chip, for performance and efficiency.

Both platforms natively support JAX, MaxText, PyTorch, SGLang and vLLM (the frameworks developers already use) and offer bare-metal access, giving customers direct hardware access without the overhead of virtualization. Open-source contributions, including MaxText reference implementations and Tunix for reinforcement learning, support turnkey paths from capability to production deployment.

Designing for power efficiency at scale

In today’s data centers, power, not just chip supply, is a binding constraint. To address this, we have optimized efficiency across the entire stack, with integrated power management that dynamically adjusts power draw based on real-time demand. TPU 8t and TPU 8i deliver up to two times better performance per watt than the previous generation, Ironwood.

But efficiency at Google is not just a chip-level metric; it’s also a system-level commitment that runs from silicon to the data center. For example, we integrate network connectivity with compute on the same chip, significantly reducing the power costs of moving data across the TPU pod. Even our data centers are co-designed with our TPUs. We innovated across hardware and software to enable our data centers to deliver six times more computing power per unit of electricity than they did just five years ago.
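As a rough back-of-the-envelope sketch, the two efficiency figures above can be turned into more intuitive numbers. The function names and the 100-unit baseline are illustrative assumptions, the calculation treats the six-times gain as if it compounded evenly each year, and "up to two times" is an upper bound rather than a typical result.

```python
def annualized_gain(total_gain, years):
    """Constant yearly multiplier that compounds to total_gain over years."""
    return total_gain ** (1 / years)


def throughput_at_fixed_power(baseline_throughput, perf_per_watt_gain):
    """Throughput available when the power budget stays the same."""
    return baseline_throughput * perf_per_watt_gain


# Six times more compute per unit of electricity over five years.
rate = annualized_gain(6.0, 5)
print(f"Implied yearly multiplier: {rate:.3f}")

# Hypothetical baseline of 100 units of work under a fixed power cap,
# using the best-case 2x performance-per-watt figure.
print(throughput_at_fixed_power(100.0, 2.0))  # prints 200.0
```

Under that even-compounding assumption, the six-times figure works out to roughly a 43% improvement per year. When power is the binding constraint, any gain in performance per watt translates directly into more work done within the same power envelope.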

TPU 8t and TPU 8i continue that trajectory. Both are supported by our fourth-generation liquid cooling technology that sustains performance densities air cooling cannot. By owning the full stack, from Axion host to accelerator, we can optimize system-level energy efficiency in ways that simply cannot be achieved when the host and chip are designed independently.